I’ve had the Beach Boys’ “Good Vibrations” stuck in my head for about a month. As the son of Boomers, I cannot help but hear the refrain when folks ask me what I’ve been up to. “Vibing” is my answer, and there’s a smile on my face. I had been poking around, fiddling with, and reading about advances in the various AI tools for a while, but after the holidays I decided to get hands-on with a concrete project. I’ve had a public policy idea in my head for at least the last six years, and despite my sharing it with a few folks around town, it hadn’t caught on. This is not a post about that idea. If you want to dig in, you can go check out the project on GitHub: https://github.com/joburke1/tdranalysis. This is a post about my experience getting from zero to version 1 using Claude Code.

File -> New Project

As a product manager, I’ve spent plenty of time learning about various layers of the tech stack, new technologies, old technologies, how and when to use them, and how to compose them into products and services to meet user needs. But I’ve never actually been “hands on keyboard” for anything that wasn’t a tutorial or pre-baked project designed to help me learn. What I wanted to do with this project was take a concept from inception to version 1 to stress test the marketing claims of the AI providers.

Having had a few false starts with some of the free versions of the apps, I started by upgrading myself to a Claude Pro account and grabbing a blank sheet of paper. Diving right into code with the older free models had given me an unworkable app with plenty of tech debt last year, so this time I knew I needed access to the latest models and a plan.

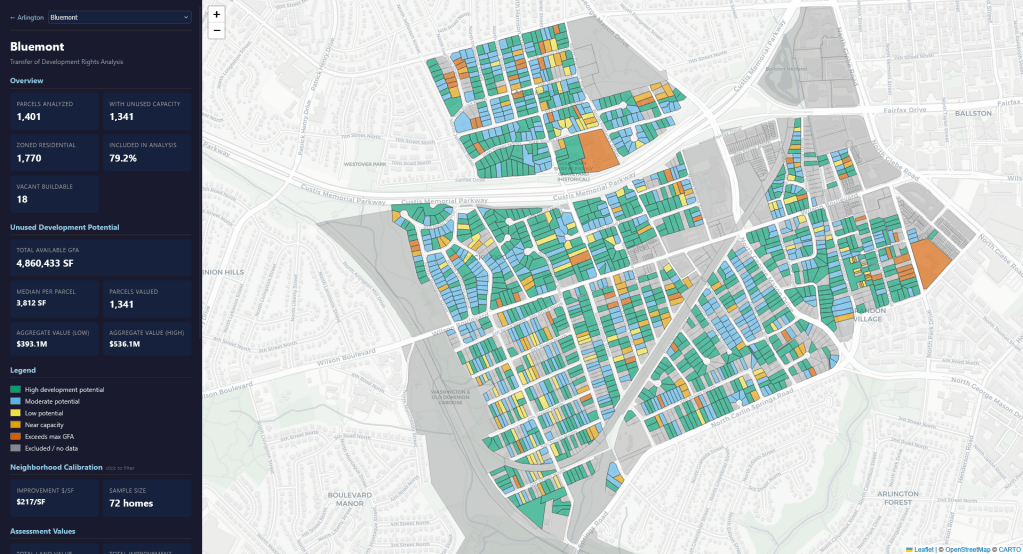

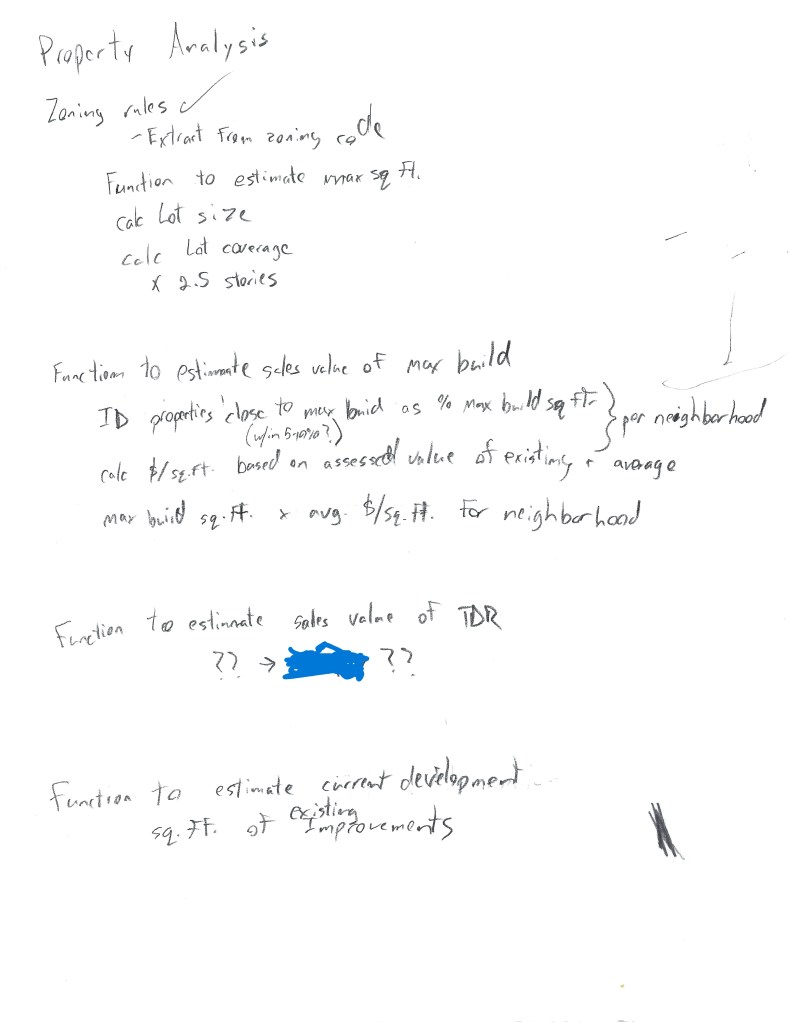

The question I was investigating required building a parcel-level analysis of the residentially zoned properties in Arlington County, Virginia. The goal was to estimate how much unused development capacity exists in residential neighborhoods and what that capacity might be worth to commercial developers as transferable bonus density. The first step was to build context.

Plan before you code

I created a project in claude.ai as a sandbox to house my project-specific conversations and artifacts. Throughout the project I would bounce between this sandbox and my Claude Code plugin in VS Code. Working through the conceptual framework of the project in this sandbox with Claude, specifically using its research and synthesis capabilities, allowed me to quickly iterate through key concepts of the project and develop project artifacts that I could use as authoritative references for humans, as well as key context for the coding portion of the work. I would start with what I thought was a good prompt, it would go off in kinda the right direction, and I would correct it. Wash, rinse, repeat until I had a reliable artifact I could import into my code repository.

On my blank sheet of paper, I had broken down the project into logical functions. Once I created artifacts extracting the calculation rules from the zoning regulations, I was able to work through each function and produce a map of my neighborhood rather quickly, which was quite satisfying.
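To give a flavor of what one of those logical functions might look like, here is a minimal sketch of a per-parcel capacity calculation. It is not the code from the repository. The `Parcel` fields, the function names, and especially the single `max_floor_area_ratio` rule are hypothetical stand-ins; the real calculation rules come from the zoning regulations and are more involved.

```python
from dataclasses import dataclass


@dataclass
class Parcel:
    """Hypothetical parcel record; field names are illustrative only."""
    parcel_id: str
    lot_area_sqft: float
    existing_floor_area_sqft: float
    # Placeholder rule: allowed floor area as a fraction of lot area.
    # The actual zoning rules are assumed to be more complex than this.
    max_floor_area_ratio: float


def allowed_floor_area(parcel: Parcel) -> float:
    """Floor area the placeholder rule would permit on this parcel."""
    return parcel.lot_area_sqft * parcel.max_floor_area_ratio


def unused_capacity(parcel: Parcel) -> float:
    """Allowed floor area minus what is already built, floored at zero."""
    return max(0.0, allowed_floor_area(parcel) - parcel.existing_floor_area_sqft)


def total_unused_capacity(parcels: list[Parcel]) -> float:
    """Sum unused capacity across a neighborhood's parcels."""
    return sum(unused_capacity(p) for p in parcels)


if __name__ == "__main__":
    sample = [
        Parcel("A", lot_area_sqft=6000, existing_floor_area_sqft=2000, max_floor_area_ratio=0.5),
        Parcel("B", lot_area_sqft=5000, existing_floor_area_sqft=3000, max_floor_area_ratio=0.5),
    ]
    for p in sample:
        print(p.parcel_id, unused_capacity(p))
    print("total", total_unused_capacity(sample))
```

Keeping each rule in its own small function is what made it practical to work through the project one function at a time and to check each piece by hand.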

Test and Iterate

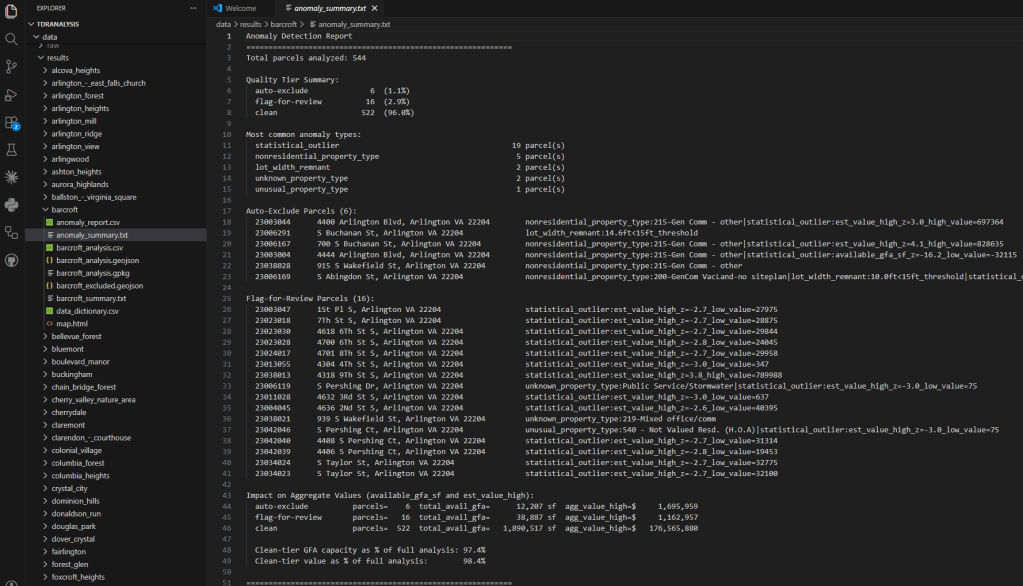

Of course, it was wrong, which was frustrating. But it wasn’t obviously wrong, or obviously broken. It was wrong and broken in subtle ways. Instead of spending days or weeks trying to trace through the code and hoping I understood it, I was able to quickly build validation tools that exposed the steps of the analysis pipeline along the way. I’m perfectly capable of doing the calculations involved on a single parcel, so spot-checking seemed like a workable approach. This is where humans can still add a lot of value to the paired AI-human coding team. The years of domain knowledge gathered by the human (in this case, knowledge about my neighborhood) allow for rapid validation and iteration on the results. Pairing with Claude Code allowed me to build tooling to support this validation and iteration just as rapidly as I had built the initial analysis pipeline. I ended up with several project-specific skills and an anomaly detection and resolution workflow that allowed me to quickly surface and resolve edge cases that were not handled in the initial analysis logic.
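A rough sketch of the idea, not the actual tooling from the project: one function that exposes every intermediate step for a single parcel so it can be checked by hand, and one that flags parcels whose inputs look suspicious. The field names, the `ratio` parameter, and the two anomaly rules are all hypothetical and chosen only for illustration.

```python
def trace_parcel(parcel: dict, ratio: float) -> list[tuple[str, float]]:
    """Return each intermediate value for one parcel, in pipeline order,
    so a human can compare them against a hand calculation."""
    steps = [
        ("lot_area_sqft", parcel["lot_area_sqft"]),
        ("existing_floor_area_sqft", parcel["existing_floor_area_sqft"]),
    ]
    allowed = parcel["lot_area_sqft"] * ratio  # hypothetical placeholder rule
    steps.append(("allowed_floor_area_sqft", allowed))
    unused = max(0.0, allowed - parcel["existing_floor_area_sqft"])
    steps.append(("unused_capacity_sqft", unused))
    return steps


def find_anomalies(parcels: list[dict], ratio: float) -> list[tuple[str, list[str]]]:
    """Flag parcels that deserve a manual look, with the reasons why."""
    flagged = []
    for p in parcels:
        reasons = []
        if p["lot_area_sqft"] <= 0:
            reasons.append("non-positive lot area")
        if p["existing_floor_area_sqft"] > p["lot_area_sqft"] * ratio:
            reasons.append("existing floor area exceeds allowed")
        if reasons:
            flagged.append((p["parcel_id"], reasons))
    return flagged


if __name__ == "__main__":
    parcels = [
        {"parcel_id": "A", "lot_area_sqft": 6000, "existing_floor_area_sqft": 2000},
        {"parcel_id": "B", "lot_area_sqft": 0, "existing_floor_area_sqft": 1500},
    ]
    for name, value in trace_parcel(parcels[0], ratio=0.5):
        print(f"{name}: {value}")
    print(find_anomalies(parcels, ratio=0.5))
```

The trace is for spot-checking a parcel I know well; the anomaly list is for finding the parcels I would not have thought to check.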

A wide-angle lens

Anyone who has worked on a cross-functional team knows that someone (usually the product manager) needs to keep the big picture in mind as the team works through the details. When you’re flying solo, this context switching can be difficult. When I needed a checkpoint, I would bounce back to my project sandbox and ask Claude to do an adversarial assessment of the project and identify weaknesses. Most of them I had anticipated based on where I was in the project, but the assessment helped me validate progress and check things off my list without spending the time and energy trying to remember every small piece of the project I had already worked through. One area I hadn’t thought of in advance was the demand side of the valuation model.

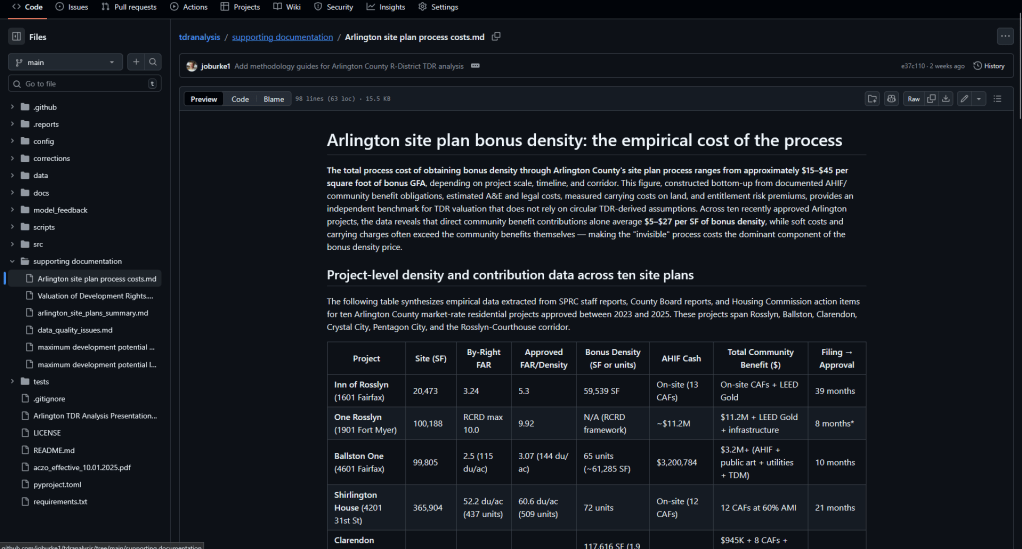

A key question for the analysis rests on the cost per square foot of the development rights. This isn’t a number you can look up. Claude was key in diving into the research and synthesizing evidence. In the end, on the demand side, I was able to compile a research appendix that I don’t think publicly existed until I pulled it together. Again, here is where the collaboration was key. Based on my domain knowledge, I knew the data existed and where to get it, but if I had to do it manually it would have taken weeks. With Claude I accomplished it in an afternoon. What resulted is the first (to my knowledge) publicly available estimate of the cost of Arlington County’s site plan process.

Publish and iterate?

An engineering colleague of mine recently asked me for advice on when he should release the project that he’s working on, and I parroted the Reid Hoffman quote: “If you aren’t embarrassed by the first version of your product, you shipped too late.” I think I’m right on time. I worked through a number of data quality and discrepancy issues before releasing, but I keep finding issues that I need to address. I see parcels that are obviously wrong as I click through the neighborhoods. I’m nervous that the assumptions and estimation methods are wrong in ways that will be obvious to people with real domain knowledge in real estate. But I’ll never get that feedback if I don’t put it out in the world and ask folks to take a look.

Inherent in publishing version 1 is the possibility of a version 2. On my roadmap are better data sources and using Claude to partially automate the evaluation and adjudication of feedback. Also, the website does not work as a stand-alone artifact. If I can get to the point where I’m getting feedback from people that I don’t know personally, then the website will need to include a better framework for orienting the user.

I’m picking up good vibrations

[It’s] giving me excitations. Claude, that is. What I really did not expect was the emotional experience of using Claude Code. It was fun, and addictive. The speed at which I was able to see substantive progress and results was remarkable. At first I would burn through my session limit in an hour or so and have to wait anxiously for my next fix. After a couple of days I was planning my routine around it. “Ok, I’ll schedule that meeting for 10:30 because I’ll have burned through my limits and need something to do until noon when it resets…” Even though I was moving faster than I could have imagined, my backlog was growing even faster. Every time I learned a new technique, or tried a new agent and got more efficient, it would spawn 10x as many ideas for things I never would have thought possible before. Fortunately this cycle of reward and withdrawal was broken by the weekend and life, and I returned the next week more prepared for the emotional roller coaster, but it was real. I do think this unexpected joy and delight adds to the hype cycle you’re seeing as folks start evangelizing. On the negative side, there is also the realization that there are a ton of smart people who are using these tools to go even faster, and a foreboding sense that you may be falling behind. I don’t know if that’s true, but the feeling is there.

So what?

These tools are a productivity enhancement for software engineers and a real game changer for folks with technical understanding but limited implementation experience. Software will be easier to produce, but this is also a new way of working. Adapting to it, and becoming proficient and eventually skilled in it, takes time. The tools do not come ready “out of the box.” I have to give a shout-out to affaan-m and their Everything Claude Code project. It saved me months of trial and error. If you’re inclined to try this yourself, find some plugins from folks who have been doing this a while and spend a little time on project setup, and you’ll save yourself a lot of headache.
